One could say there is no direct relationship between contour interval and DEM resolution, in that changing one will not necessarily affect the other. The other way to look at it is that the smaller your contour interval, the higher the resolution you can set for the DEM without introducing false accuracy or precision. Ideally you would at most match the two, or use a lower resolution than your interval supports.

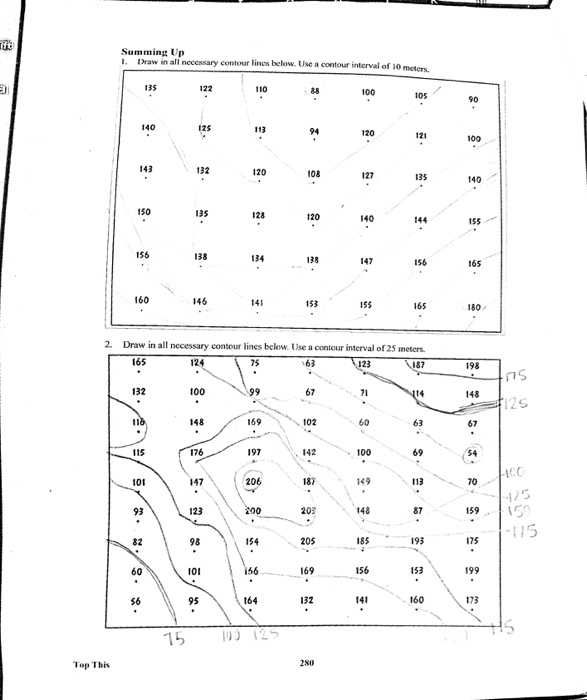

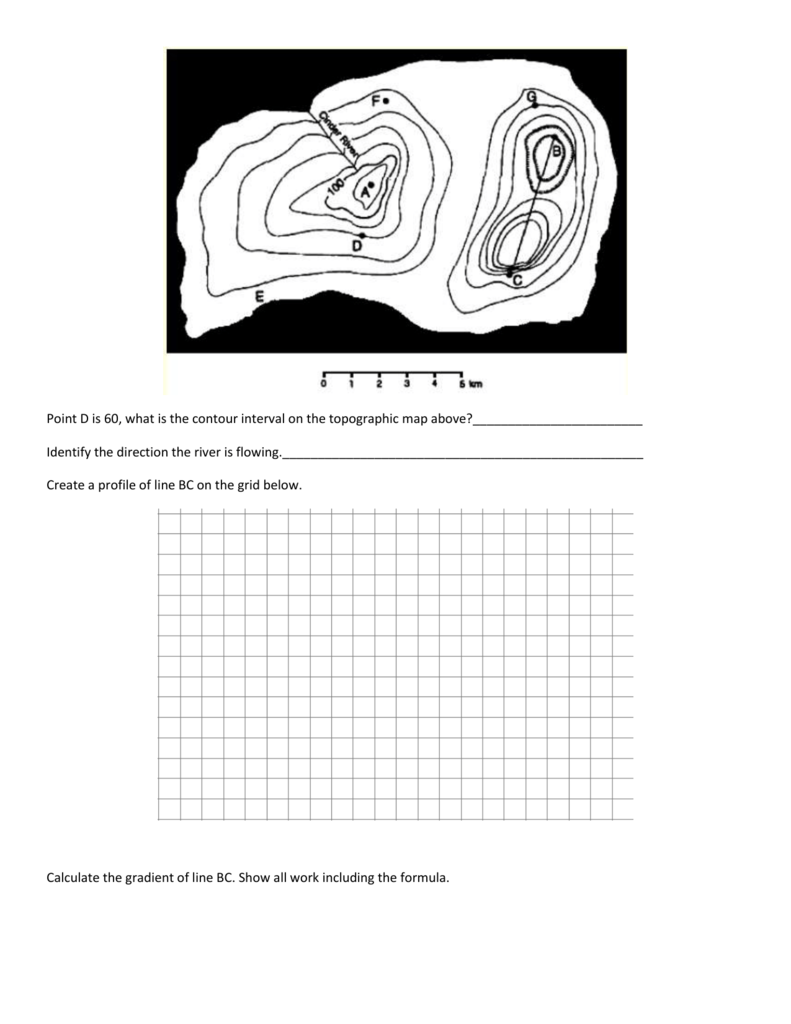

Contour interval is a single number that defines the number of vertical units between adjacent contours. If it is 5, each line represents 5 vertical feet of change from its neighbor. A single set of contours cannot have intervals of 10, 5 and 2 at once; for that you would need separate sets of contours. You can, of course, highlight the 10 m contours on a 2 m interval (every fifth line) or on a 5 m interval (every other line). For example, if the elevation difference between two index lines (the thicker, labeled contours) is 1000 feet and there are five contour steps between them, the contour interval is 1000 / 5 = 200 feet.

In surveying, a small contour interval is used for a small area and a larger contour interval for a large area, for instance when a large area has to be mapped onto a small sheet of paper.

Contour lines are an interpolation of continuous point samples (or of a grid, which is what a DEM is). If you are creating a DEM from contours, you are also interpolating, that is, estimating unknown values from known ones. In fact, since contours are typically produced via interpolation, you are re-interpolating data, and whenever you interpolate you have the potential to introduce false accuracy into your data.

A DEM has two resolutions, horizontal and vertical, where resolution is the minimum distinguishable distance between samples. Horizontal resolution is controlled by the cell size, since each elevation value is assigned to the area of one cell. Vertical resolution is controlled by the precision of the elevation value stored in the cell, and it mostly matters when you are actually measuring or have a stated source.

Let's say you have a contour interval of 10 m and your contours are uniformly 100 m apart. This means your known values (the contour lines) are no closer than 100 m horizontally or 10 m vertically. You can create a DEM with cells smaller or larger than that, storing values to the nearest 1 m or 10 m, but the finer, higher-resolution version won't truly be any more accurate than your source measurements. See "When dealing with rasters of varying resolutions should one resample to the highest or lowest resolution?" for more explanation of why this is so.
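To make the false-accuracy point concrete, here is a minimal Python sketch of a one-dimensional profile between two adjacent contour lines, using the 10 m interval and 100 m spacing from the example. It assumes plain linear interpolation (the simplest choice; real contour-to-DEM tools may use other methods), and the function `interpolate_profile` and its constants are hypothetical, chosen only for illustration.

```python
# Hypothetical 1-D profile crossing two adjacent contour lines.
# Values follow the example: 10 m contour interval, contours 100 m apart.
CONTOUR_SPACING_M = 100.0  # horizontal distance between the two contours
LOWER_CONTOUR_M = 100.0    # elevation of the lower contour (assumed)
UPPER_CONTOUR_M = 110.0    # elevation of the upper contour (lower + 10 m interval)


def interpolate_profile(cell_size_m: float) -> list[tuple[float, float]]:
    """Estimate elevations every `cell_size_m` metres between the two contours.

    Uses linear interpolation (an assumption). Only the two endpoint
    elevations are measured; every value in between is an estimate.
    """
    n_cells = round(CONTOUR_SPACING_M / cell_size_m)
    profile = []
    for i in range(n_cells + 1):
        x = i * cell_size_m
        z = LOWER_CONTOUR_M + (UPPER_CONTOUR_M - LOWER_CONTOUR_M) * x / CONTOUR_SPACING_M
        profile.append((x, z))
    return profile


if __name__ == "__main__":
    coarse = interpolate_profile(100.0)  # cell size matches the contour spacing
    fine = interpolate_profile(10.0)     # ten times finer cells
    print("coarse:", coarse)
    # The fine profile stores values to the nearest metre, which looks more
    # precise, but it still rests on the same two measured elevations.
    print("fine:  ", [(x, round(z)) for x, z in fine])
```

Both profiles rest on the same two measured elevations. The extra cells in the fine profile only restate the straight line between them, which is why a finer cell size here adds apparent detail rather than accuracy.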
In the figure, lines A and B are contour lines showing the low terrain, that is, features of lower elevation. Point Y has an elevation of 446 meters, and point W lies somewhere between the 100 and 300 lines.

Knowing how to calculate the contour interval is a useful skill when reading contour lines. The vertical distance, or difference in elevation, between adjacent contour lines is known as the contour interval. Contour lines connect points of equal elevation, so they show the high and low points of the terrain; they are one kind of isopleth, and another example is the isobar, a line of equal pressure.

To find the interval, start at one index line and count the contour lines step by step up to and including the next index line, just as you would count from one number to the next. Maps generally have five contour steps from one index line to the next. Then divide the difference in elevation between the two index lines by that count. For example, if the index lines differ by 2000 feet and there are 10 steps between them, the contour interval is 2000 / 10 = 200 feet.

Contour map generation example: the elevation difference between two points is 300 feet, and it is to be divided into three contour intervals. Divide 300 feet by 3 to get one contour every 100 feet. Mark four points (two at the endpoints and two between) and draw the contours.
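The counting rule and the worked examples can be checked with a small Python sketch. The function names are hypothetical, the 300-foot example is measured from an assumed base elevation of 0, and `is_index_contour` assumes index lines fall on every fifth contour counted from zero, which is common but not universal.

```python
def contour_interval(index_difference: float, steps: int) -> float:
    """Contour interval: elevation difference between two index lines
    divided by the number of contour steps from one to the next."""
    if steps <= 0:
        raise ValueError("steps must be a positive count")
    return index_difference / steps


def contour_levels(low: float, high: float, n_intervals: int) -> list[float]:
    """Elevations to mark between two points: both endpoints plus the
    evenly spaced contours between them."""
    interval = contour_interval(high - low, n_intervals)
    return [low + i * interval for i in range(n_intervals + 1)]


def is_index_contour(level: float, interval: float, every: int = 5) -> bool:
    """True if `level` falls on an index line, assuming index lines are
    every `every`-th contour counted from elevation 0 (hypothetical convention)."""
    return round(level / interval) % every == 0


if __name__ == "__main__":
    print(contour_interval(2000, 10))   # 200.0 (feet)
    print(contour_levels(0, 300, 3))    # the four points to mark
    print(is_index_contour(1000, 200))  # 1000 ft is the fifth 200 ft contour
```

Passing `every=2` to `is_index_contour` models highlighting every other line instead of every fifth.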
